Dare HEC Media Hub

High-Visibility Tasks Fuel Employee Motivation

Raphaël Lévy

In firms facing intense competition for talent, task allocation can become a lever for career growth, retention, and performance.

Insights You Need

AI can process everything, but it cannot care about anything. Jean-Philippe Courtois and HEC's Ilona Boniwell dive into what human leadership needs to safeguard, and why taking a …

Despite decades of programs to democratize entrepreneurship, many vulnerable groups remain underrepresented. HEC Paris research invites a rethink.

3 minutes

Isaline Rohmer

Some encounters change destinies. Keep your mind open.

Inspiring voices

94 minutes

Leaders speaking openly about their vulnerability

HEC Paris Sustainability & Organizations Institute

Stories

What the Homeless Taught Us About Dignity

HEC Paris Sustainability & Organizations Institute

Jean-Marc Semoulin: Trust as a Political Act

HEC Paris Sustainability & Organizations Institute

HEC Startups

La maison de la Conversation: Here we treat the ailment of the century!

Emma France, HEC Paris Innovation & Entrepreneurship Institute

Tom & Josette: Reinventing childcare through intergenerational micro-nurseries

HEC Paris Innovation & Entrepreneurship Institute

Cognitii: The AI Platform Tackling the Special Education Gap

HEC Paris Innovation & Entrepreneurship Institute

Wishupon: Reinventing How Users Save, Share, and Shop Online

HEC Paris Innovation & Entrepreneurship Institute

Neurobus: Bringing Frugal AI to Defense and Aerospace

HEC Paris Innovation & Entrepreneurship Institute

Veeton: Reinventing Fashion Visuals With AI

HEC Paris Innovation & Entrepreneurship Institute

Revyze: The Social App Transforming How Students Study

HEC Paris Innovation & Entrepreneurship Institute

Genomines: Producing Nickel with Plants, Not Mines

HEC Paris Innovation & Entrepreneurship Institute

Think sharper. Grasp what matters. Solve better.

Reskill Masterclass

32 minutes

How Do Firms Value Sales Career Paths?

Dominique Rouziès

Knowledge

What Every Family Business Board Member Needs to Know About Governance Transitions

Center for Family Business

What Every Family Business Leader Should Learn from Mathieu Lustrerie

Center for Family Business

Decoding

Enough talk. Join the doers. Make change happen.

Students POV

Features

March 2026

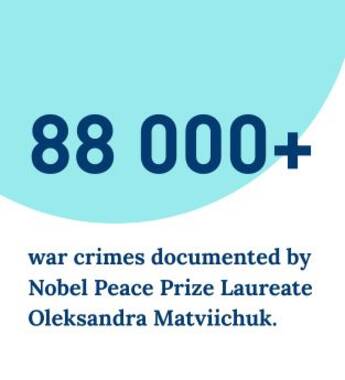

Rebuilding The Social Fabric

Across economic instability, political polarization, rising individualism, mental health crises, and climate disruption, the slow erosion of social cohesion is a common fracture line.

March 2026

Trust Is the Invisible Infrastructure of Prosperous Societies

Marieke Huysentruyt, Yann Algan

Workplace Experience Determines Voting Patterns Far More than France's Political Fault Lines

Yann Algan, Antonin Bergeaud, Camille Frouard

5 minutes

Is Loneliness the Most Overlooked Economic Risk of Our Time?

Marieke Huysentruyt, Yann Algan

What Debt Teaches Us About Human Exchange

The Selection By

Prof. Brian Hill

Brian Hill is Research Director at the French National Centre for Scientific Research (CNRS) and Professor in Economics and Decision Science…

February 26th, 2026

5 minutes

Podcasts

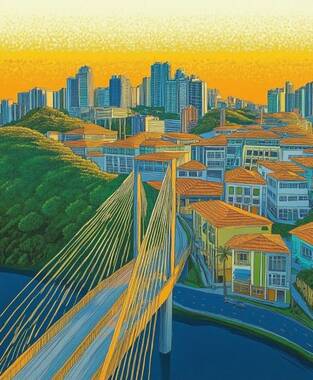

Voices Creating New Narratives and Shaping the Future of Megacities

Inside São Paulo: Building Bridges Toward Shared Prosperity

HEC Paris Sustainability & Organizations Institute

41 minutes

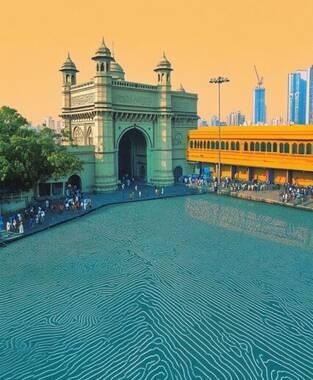

Inside Mumbai: Bridging Divides in a City of Extremes

HEC Paris Sustainability & Organizations Institute

Inside Cairo: A Layered City Where Past and Future Intersect

HEC Paris Sustainability & Organizations Institute

38 minutes

Inside Kinshasa: The Forces Powering Africa's Largest Urban Future

HEC Paris Sustainability & Organizations Institute

27 minutes

HEC researchers unpack their latest findings on real-world challenges

Have Banks Outsourced Financial Fragility?

Quirin Fleckenstein

27 minutes

How Platform Architecture and Oscar Nominations Influence Our Choices

Michelangelo Rossi

32 minutes

Videos

Videos that break down big ideas in minutes

Leading figures with ideas that matter to elevate the debate

Live masterclasses to learn new skills and grow professionally

Students’ perspectives on what matters today and what drives them

For curious minds seeking research-based insights and inspiring stories that help make sense of a world in transition, and unlock solutions within a purpose-driven community.